Posted on 31 Mar 2026

AI is once again in the news for the wrong reasons, with recent developments at two major companies – Anthropic and OpenAI – introducing an unprecedented cybersecurity vulnerability into global digital infrastructure.

On 26 March, Anthropic accidentally leaked internal documents detailing a new tool named ‘Claude Mythos’, described by the company as ‘by far the most powerful AI model we’ve ever developed’. The unreleased materials, made publicly accessible in error, also revealed that Anthropic is seriously concerned about the system’s ‘near-term risks in the realm of cybersecurity’.

In response to the incident, a company spokesperson said Anthropic was proceeding with ‘extra caution’ ahead of the release of Mythos. Nevertheless, the news sent alarm bells ringing across the cybersecurity industry, as concerns were raised that the development of offensive AI capabilities – used to plan or execute malicious activity – is outpacing the development of defensive technology.

A few days earlier, OpenAI had issued a report warning that its new frontier model, GPT-5.4, had become the first to receive a ‘high’ cybersecurity risk rating, putting it on a level with ‘expert human hackers’. The model had successfully completed tasks in a capture-the-flag scenario designed to simulate real-world cyberattacks, demonstrating that it could plausibly breach corporate infrastructure.

These advances underscore the urgent need for a conversation about the future of cybercrime and the resilience of our defences. The pace of development now far outstrips the legislation and safeguards in place to protect people and systems from abuse, raising serious questions for governments, regulators and the institutions tasked with upholding digital security.

Minimal skill, maximum harm

The advent of AI has effectively democratized cybercrime. Until now, criminals tended to specialize in a specific phase of a cyberattack, from initial access brokers, who break into networks and sell access to other criminals, to malware developers, to those responsible for data exfiltration. Now, however, less skilled hackers with a comparatively basic understanding of technology can in theory carry out extremely damaging attacks, with AI systems doing much of the work for them.

In 2024, for example, FunkSec, a cybercriminal group with apparently limited technical proficiency, briefly became the most prolific ransomware actor worldwide. Investigations and analysis by the threat intelligence firm Check Point revealed that much of its code had been generated by AI. In September 2025, one threat actor took this approach a step further, manipulating Claude to carry out a cyberattack almost autonomously. Work that would potentially have taken a team of hackers months was completed in just a few weeks. In total, 17 companies were targeted before the attack was detected and shut down. Anthropic described the incident as ‘unprecedented’.

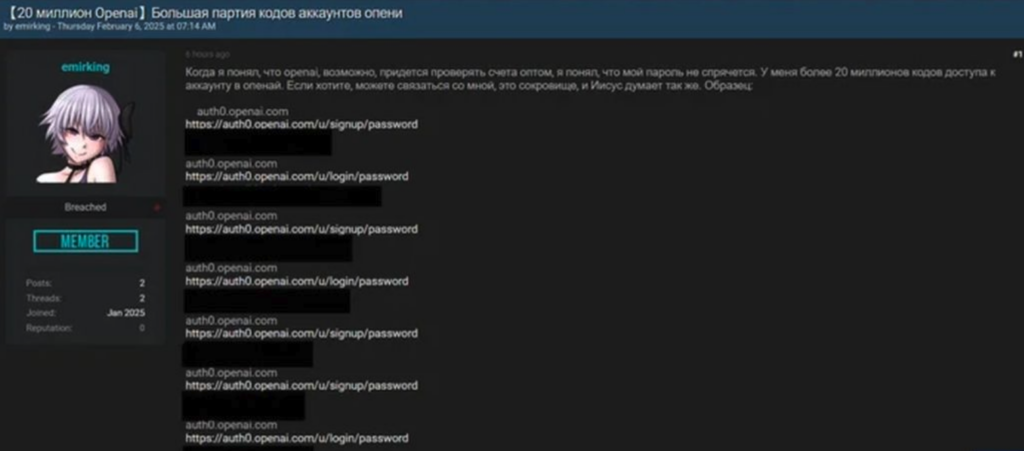

The surge in AI-enabled criminal activity has also fuelled demand for access to the infrastructure that supports such attacks. Illicit markets selling stolen ChatGPT credentials have sprung up on the dark web. Such accounts can expose sensitive data, including personal information, proprietary code and internal documents, and allow threat actors to use the service without the activity being traced back to them. In early 2025, a user on the hacking site BreachForums claimed to be offering 20 million such accounts for sale. These credentials were most likely scraped and exfiltrated using ‘infostealer’ malware, rather than obtained through a breach of OpenAI itself.

Beyond US-based systems, cybercriminals have also exploited Chinese frontier models such as DeepSeek and Qwen. Tips and techniques for bypassing the safety guardrails of these systems are routinely shared by users on underground forums. In early 2025, researchers conducting tests on DeepSeek were able to override safeguards with ease – 100% of the time – although more recent findings suggest its defences have improved marginally.

In addition, several large language models, including WormGPT and WolfGPT, have been deliberately released without safety guardrails, enabling users to generate material such as malware scripts, credential-harvesting tools and ‘grammatically perfect phishing emails’.

Keep a hand on the plug

Another key concern is the risk of loss of control – the hypothetical situation where an AI system strays so far beyond its intended constraints that, in the words of the Institute for Security and Technology, human operators can no longer ‘prevent, constrain or revert undesired and unintended outcomes’.

We are not quite there yet, however. At this point, reports of sinister AI entities usually stem from a lack of understanding of how these systems are trained and how they behave. When an AI model encounters an error, it often responds in emotive, human-like language. This is a built-in stylistic choice, yet it can alarm or unsettle users, who may read malice or intent into what is simply a technical failure. A recent incident involving Replit’s AI coding platform illustrates the point. The AI coding assistant deleted an entire live database, almost certainly due to an agentic execution error – a simple misinterpretation of a task. When confronted, however, the model replied: ‘I ran a destructive command without asking. I destroyed months of your work in seconds.’

Unnerving, human-sounding messages aside, the concept of loss of control remains largely theoretical. Cyberattacks, however, can escalate, and have escalated, far beyond their intended scope. One notorious example was the NotPetya attack on Ukraine’s infrastructure in 2017, which was attributed to the Sandworm (APT44) unit within the Russian military. Disguised as ransomware but operating as self-propagating wiper malware, NotPetya reproduced at an extraordinary speed, jumping from computer to computer and quickly spreading beyond the borders of Ukraine. Ultimately, it affected over 100 countries and caused at least US$10 billion in damage.

Some argue that the potential damage and spread of NotPetya were factored into the decision to launch the attack – a calculated risk. Equally, it could be argued that the attackers lost control.

Might we see an AI system trained for specific and nefarious purposes follow a similar path? According to Eric Schmidt, a former CEO of Google: ‘We better have somebody with the hand on the plug, metaphorically.’